Why Innovation Breaks in Real Life (And How to Test It Properly)

Most innovations don’t fail because they don’t work.

They fail because they were never tested in the real world.

On paper, everything looks promising. The concept is solid. The pilot works. The demo convinces stakeholders.

And yet, once deployed in practice, things start to fall apart.

Not dramatically. Subtly.

Adoption slows. Misunderstandings appear. Workarounds emerge.

And suddenly, a “successful” innovation struggles to deliver real impact.

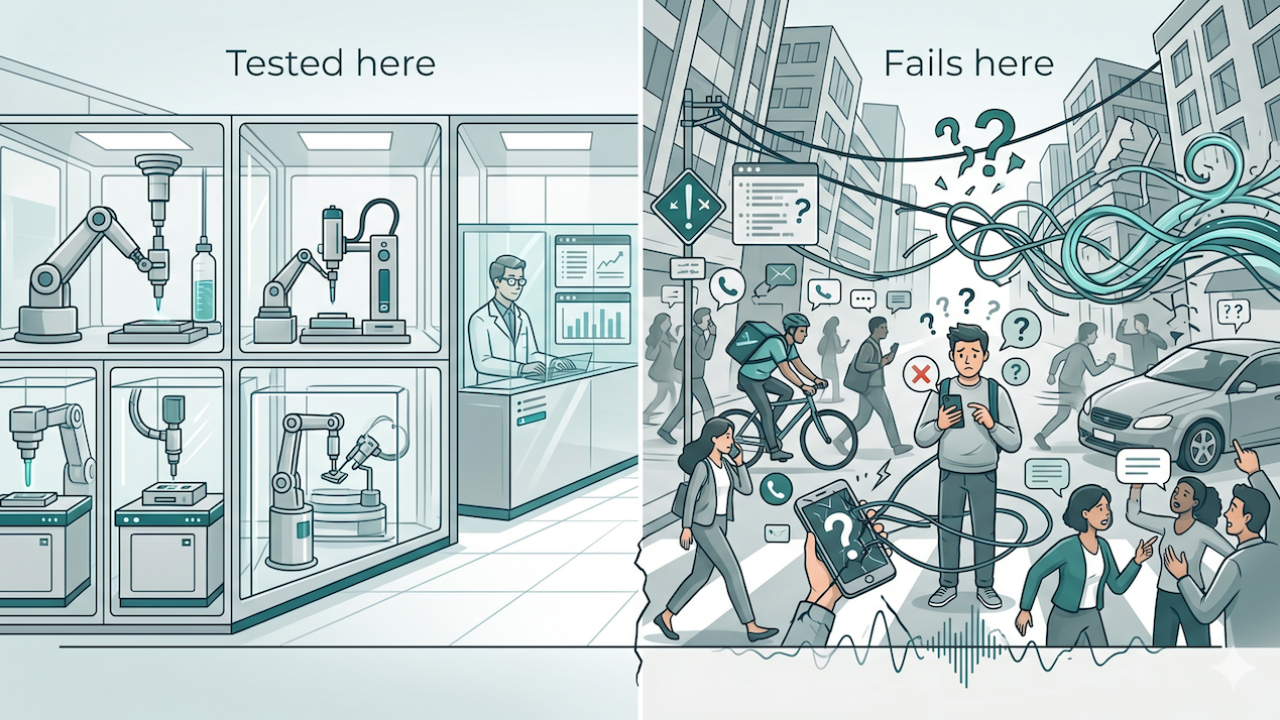

The illusion of the perfect test

In many innovation projects, testing happens in controlled environments, especially when teams are under pressure to validate quickly.

A pilot is set up. A limited group of users is involved. Conditions are optimised to give the solution the best possible chance.

And in that context, things often work.

But there is a problem.

Real life is not controlled.

In reality:

- users are distracted

- contexts shift constantly

- assumptions differ between stakeholders

- constraints appear that were never considered

What works in a clean environment does not automatically survive in a messy one.

Where innovation starts to break in practice

The real test of an innovation does not happen during the demo.

It happens when:

- multiple stakeholders interact with it

- different interpretations collide

- priorities shift under pressure

This is where hidden complexity surfaces.

A feature that seemed obvious becomes ambiguous. A workflow that felt intuitive becomes unclear. A shared understanding turns out not to be shared at all.

Interestingly, many of these issues are not technical.

They are related to:

- interpretation

- communication

- expectations

And they often only appear when the system is pushed beyond ideal conditions.

Innovation happens at the edges

If you want to understand whether an innovation will hold, you need to look at where it might break.

Not in the average use case.

At the edges.

Edge cases are situations where:

- users behave differently than expected

- contexts are less predictable

- multiple variables interact at the same time

They are uncomfortable to test.

But they are incredibly valuable.

Because they reveal:

- hidden assumptions

- weak spots in the design

- gaps in alignment between stakeholders

This is also where many collaboration issues originate. Teams often assume alignment in standard scenarios, while real differences surface only under pressure, which is one of the common failure patterns.

The danger of clean success

One of the biggest risks in innovation is what you could call “clean success”.

A pilot works perfectly.

Stakeholders are convinced.

The project moves forward with confidence.

But that confidence is built on incomplete information.

Because the conditions of the pilot did not reflect reality.

This creates a false sense of security.

And the moment the solution enters a more complex environment, the gap becomes visible.

From validation to stress testing

This is where the mindset needs to shift.

From:

proving that something works

To:

understanding where and why it breaks

That means testing not just for success, but for limits.

In practice, this includes:

- introducing variability in users and contexts

- combining multiple use cases at once

- allowing ambiguity instead of removing it

- observing behaviour under time pressure

The goal is not to make the system fail.

The goal is to make its boundaries visible.
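
One way to make the practices listed above concrete is to write the test conditions down as a scenario matrix before the pilot starts. The sketch below is only an illustration: the dimension names and values (`USERS`, `CONTEXTS`, `USE_CASES`, `INSTRUCTIONS`, `TIME_BUDGETS`) and the `build_scenarios` helper are hypothetical and should be replaced with whatever fits your own project. It combines every value of every dimension and counts how far each combination sits from the "clean" pilot setup.

```python
from itertools import product

# Hypothetical dimensions for a pilot; replace them with your own.
# The first value in each list is the most favourable ("clean") condition.
USERS = ["trained champion", "distracted first-timer", "sceptical manager"]
CONTEXTS = ["quiet office", "shop floor", "mid-handover"]
USE_CASES = ["single task", "two tasks at once"]
INSTRUCTIONS = ["fully specified", "deliberately ambiguous"]
TIME_BUDGETS = ["no deadline", "10-minute deadline"]

# The clean pilot: every dimension at its most favourable value.
CLEAN = (USERS[0], CONTEXTS[0], USE_CASES[0], INSTRUCTIONS[0], TIME_BUDGETS[0])


def build_scenarios():
    """Return all combinations, each with a count of deviations from CLEAN."""
    scenarios = []
    for combo in product(USERS, CONTEXTS, USE_CASES, INSTRUCTIONS, TIME_BUDGETS):
        # Stress = number of dimensions that differ from the clean setup.
        stress = sum(1 for value, clean in zip(combo, CLEAN) if value != clean)
        scenarios.append({"setup": combo, "stress": stress})
    # Put the scenarios furthest from the clean setup first.
    scenarios.sort(key=lambda s: s["stress"], reverse=True)
    return scenarios


if __name__ == "__main__":
    # Show a handful of the most demanding scenarios.
    for scenario in build_scenarios()[:5]:
        print(scenario["stress"], scenario["setup"])
```

The stress count is a deliberately crude measure. Its only purpose is to show how much of the possible space a clean pilot leaves untested, and to help a team pick a few uncomfortable combinations to try on purpose.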

Why this matters for innovation teams

For teams running complex innovation projects with multiple stakeholders, this has direct implications.

If testing remains too clean:

- risks are discovered too late

- misalignment grows unnoticed

- adaptation becomes costly

But when edge cases are explored early:

- assumptions become explicit

- alignment improves

- solutions become more robust

This is not about slowing down innovation.

It is about preventing costly surprises later.

And ultimately, it is about increasing the chances that an innovation actually delivers results and growth.

If you want to explore how this perspective connects to a broader shift in innovation and collaboration, you can read the full story here:

👉 From Star Trek to AI: When Tools Finally Catch Up with How We Think

Three reflections for your own projects

If you are working on innovation, here are three questions worth considering:

1. Where are you testing too cleanly?

Are your pilots reflecting real-life complexity, or ideal conditions?

2. What assumptions remain unchallenged?

Which elements of your concept have not yet been tested under stress?

3. How does your solution behave at the edges?

What happens when users, contexts, and expectations start to diverge?

From working in theory to working in practice

The difference between a promising innovation and a successful one is rarely found in the core idea.

It is found in how well it holds up in reality.

And reality is not average.

It is messy. Dynamic. Unpredictable.

The earlier you embrace that, the stronger your innovation becomes.

Innovation does not fail in theory. It fails where reality begins.

Closing reflection

Take a moment to look at your current projects.

Where are you still validating success in ideal conditions?

And what would change if you deliberately explored where things might break?

Not to prove failure.

But to build something that actually works in practice.

If you recognise these patterns in your own projects and want to work on them, you can reach out via the contact page. I am glad to share ideas and examples.